Installing Google Kubernetes Engine (GKE)

Set Up Google Kubernetes Engine in Google Cloud Console

Prerequisites

The following prerequisites are necessary for deploying Infoworks on Google Cloud Kubernetes Engine.

A Google account is required to work with GCP. Sign up for a Google account, if you do not have one.

Ensure that you have a Google project with a billing account.

Ensure that there is an adequate quota limit for CPU cores for the machine types you want to use in your GKE.

The user who sets up the Kubernetes cluster in Google Cloud requires the following permissions:

- Google Compute Admin

- API

- Service Account Usage

- Kubernetes Engine Admin

Enable the APIs for Kubernetes Engine and Compute Engine. To do this, open the Navigation menu > APIs and Services in your GCP console.
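The same APIs can also be enabled from the command line. The following is a minimal sketch that only assembles the `gcloud services enable` command and does not run it. The project ID `my-project` is a placeholder, and `container.googleapis.com` and `compute.googleapis.com` are the service names for Kubernetes Engine and Compute Engine.

```python
import shlex

# Service names for the Kubernetes Engine and Compute Engine APIs.
REQUIRED_APIS = ["container.googleapis.com", "compute.googleapis.com"]


def enable_apis_command(project_id):
    """Return the gcloud argv that enables the required APIs for a project."""
    return ["gcloud", "services", "enable", *REQUIRED_APIS, "--project", project_id]


if __name__ == "__main__":
    # "my-project" is a placeholder project ID (assumption).
    print(shlex.join(enable_apis_command("my-project")))
```

The printed line can be pasted into a shell where the gcloud CLI is installed and authenticated.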

Ensure you create a VPC in a dedicated project or shared project to set up GKE.

- Set up a bastion host (VM), as a security best practice, to connect to and operate the Kubernetes cluster. Ensure the bastion host belongs to the same VPC/subnet as the Kubernetes cluster.

- Set up Cloud NAT in the VPC network used by GKE. Cloud NAT gives the private subnets of the GKE cluster outbound internet access. Refer to the official Cloud NAT documentation.
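As an illustration of the Cloud NAT step, the sketch below assembles the two gcloud commands that are typically involved: one creates a Cloud Router in the VPC, the other creates a NAT gateway on that router. The router name `gke-nat-router`, the gateway name `gke-nat`, and the network and region values are hypothetical. The choice to NAT all subnet ranges with auto-allocated external IPs is an assumption, not an Infoworks requirement.

```python
import shlex


def cloud_nat_commands(network, region, router="gke-nat-router", nat="gke-nat"):
    """Return the gcloud argvs that create a Cloud Router and a NAT gateway on it."""
    create_router = [
        "gcloud", "compute", "routers", "create", router,
        "--network", network,
        "--region", region,
    ]
    create_nat = [
        "gcloud", "compute", "routers", "nats", "create", nat,
        "--router", router,
        "--region", region,
        "--auto-allocate-nat-external-ips",  # let Google allocate the NAT IPs
        "--nat-all-subnet-ip-ranges",        # NAT every subnet range in the region
    ]
    return [create_router, create_nat]


if __name__ == "__main__":
    # "my-vpc" and "us-central1" are placeholder values (assumption).
    for argv in cloud_nat_commands("my-vpc", "us-central1"):
        print(shlex.join(argv))
```

The router must be created before the NAT gateway, and both must be in the same region as the GKE subnets.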

Kubernetes Cluster

To set up a GKE cluster, refer to the official Google documentation.

| Category | Infoworks Recommendation | Notes |
|---|---|---|
| Private Cluster | Yes | Create a GKE cluster with private access within the VPC. This is a security best practice. |
| Public Cluster | No | Infoworks supports public clusters but does not recommend them. |
| Zonal/Regional Cluster | Regional | A regional cluster provides high availability for the GKE control plane across zones. A zonal cluster is confined to a single zone, which makes it a single point of failure. |
| NAT | Yes | GKE requires an internet connection to download the images. |

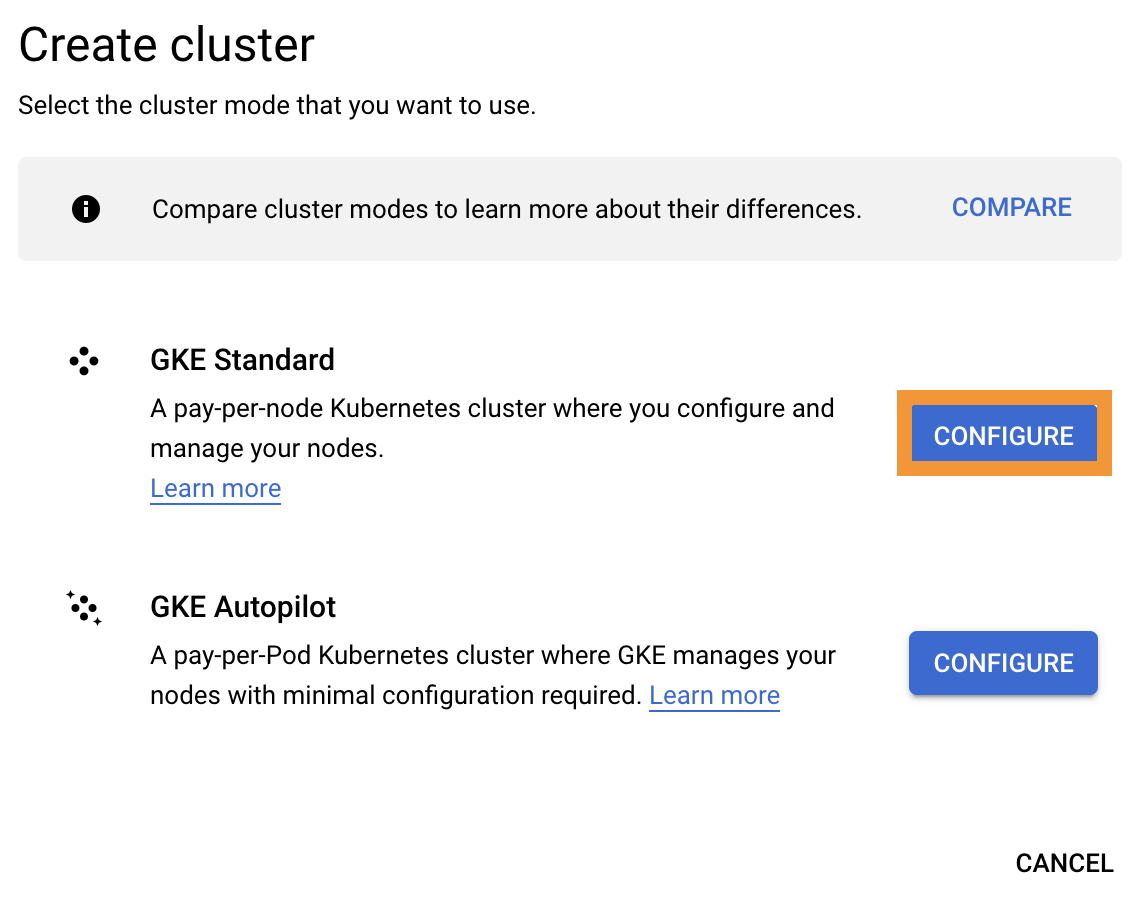

Set Up GKE

Step 1: To set up GKE, navigate to Google Cloud Console > Kubernetes Engine > Clusters.

Step 2: Click Configure.

Cluster Basics

This section provides basic information about your cluster.

Enter the required details for the following mandatory fields:

- Name

- Location type (Zonal/Regional)

- Control Plane Version

| Field Name | Description |
|---|---|
| Name | Name of the cluster. |
| Location type (Zonal/Regional) | Select Regional and specify the zones in which to launch the nodes (GKE control plane and data plane). |
| Control plane version | Kubernetes version to be installed on the nodes (GKE control plane and data plane). See the prerequisites for the supported Kubernetes versions. |

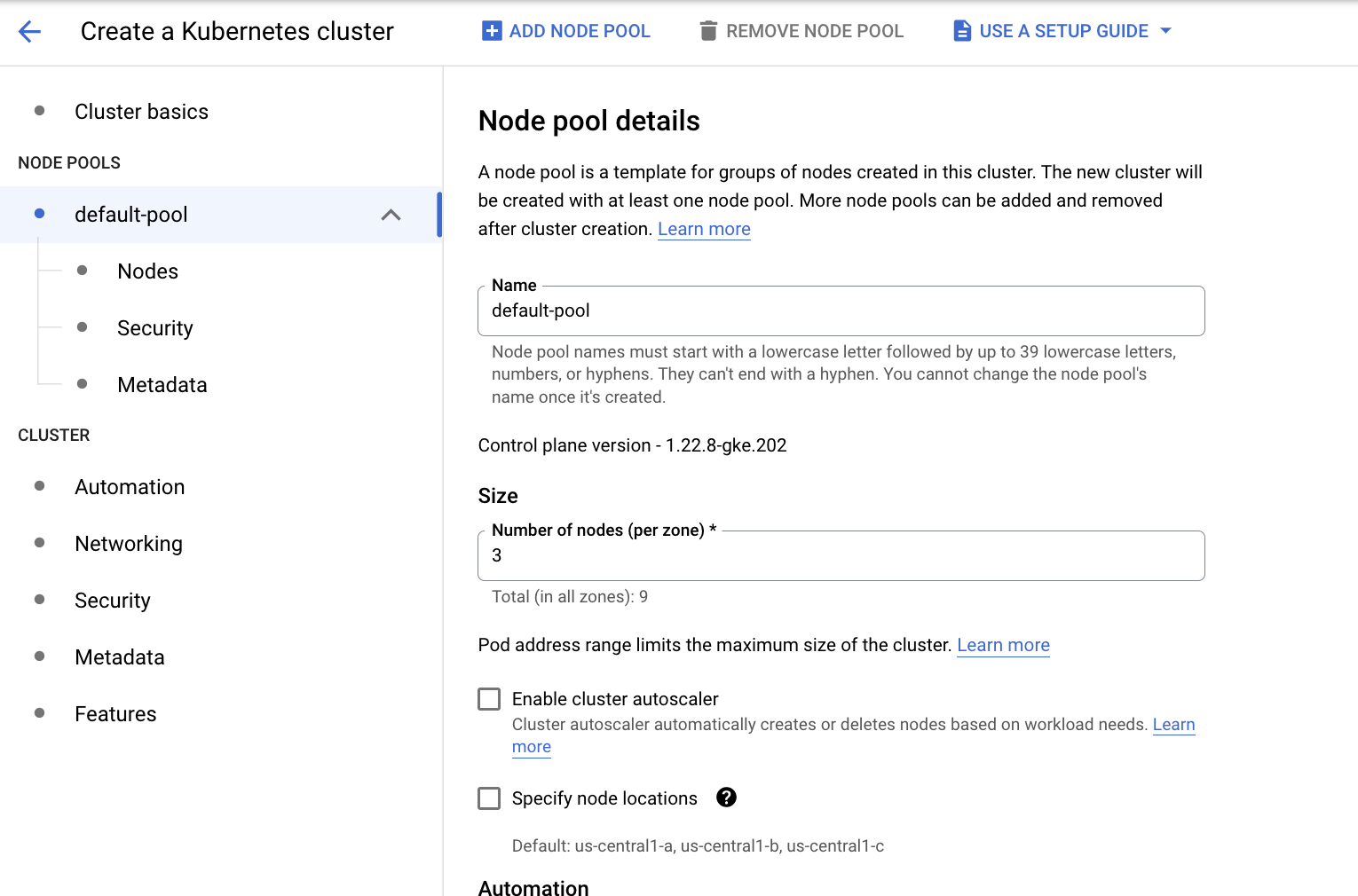

Node Pools

A node pool is a template for groups of nodes created in this cluster. The new cluster will be created with at least one node pool. More node pools can be added and removed after cluster creation.

Enter the required details for the following mandatory fields:

- Name

- Size

- Enable Cluster Autoscaler checkbox

- Image

- Machine Configuration

- Misc options

| Field Name | Description |
|---|---|
| Name | Name of the node pool. To create multiple node pools, click Add Node Pool. |
| Size | Number of nodes (VMs) per zone. Select the zones in which to place the data plane nodes. |
| Enable Cluster Autoscaler | Enables autoscaling of the nodes based on the workloads the node pool is handling. |
| Image | GKE node image. Select the default cos_containerd image. |
| Machine Configuration | n2-standard-8 (8 vCPUs and 32 GB RAM). Minimum nodes required: 2. |
| Misc options | Select based on your security and other requirements. |

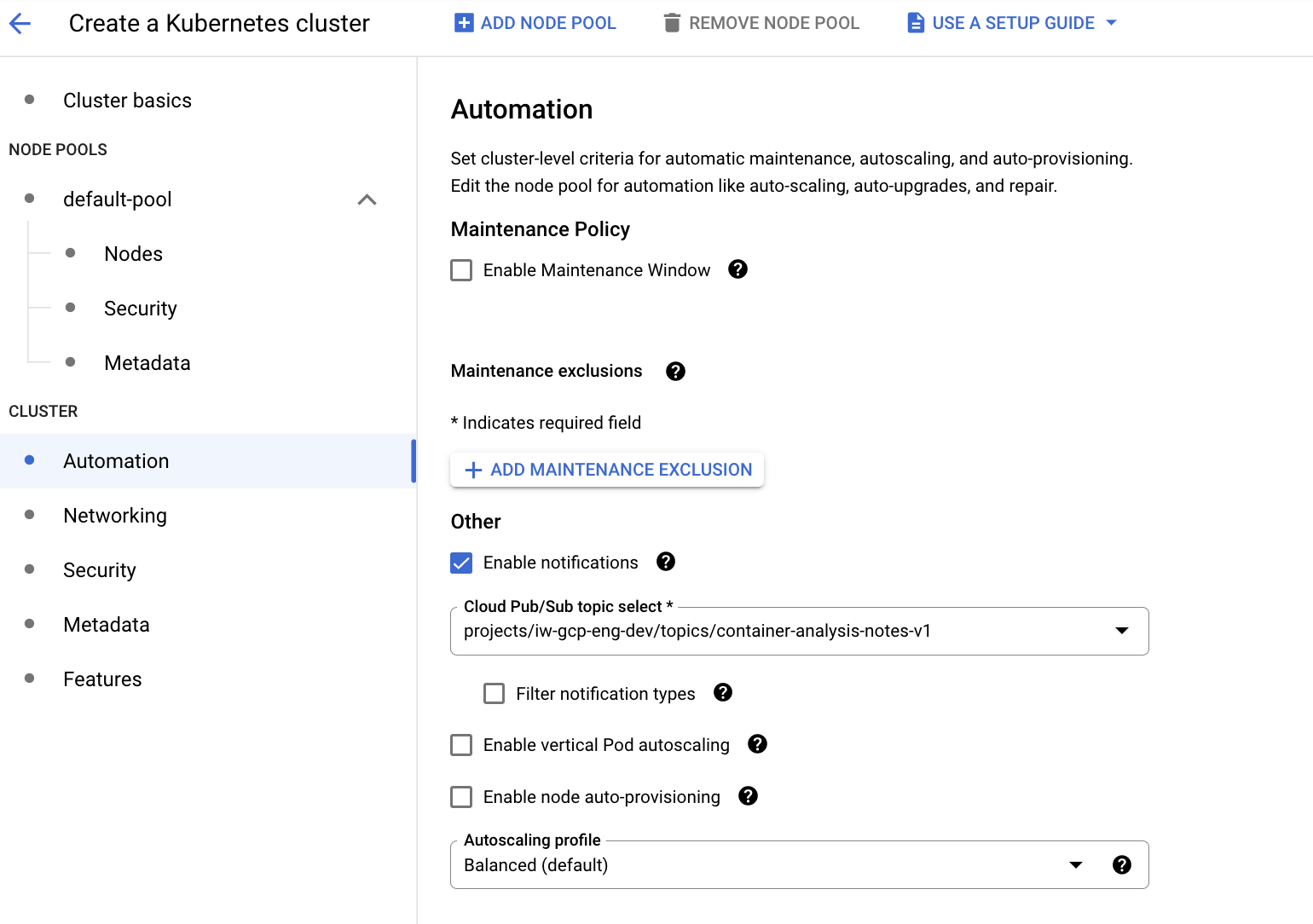
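Because Size is counted per zone, the vCPU quota mentioned in the prerequisites has to cover the node count multiplied by the number of zones. The sketch below works through that arithmetic for n2-standard-8 nodes. The zone count, quota limit, and quota usage in the example are hypothetical values.

```python
VCPUS_PER_NODE = 8  # n2-standard-8, per the Machine Configuration row


def required_vcpus(nodes_per_zone, zone_count, vcpus_per_node=VCPUS_PER_NODE):
    """Total vCPUs the node pool consumes across all selected zones."""
    return nodes_per_zone * zone_count * vcpus_per_node


def fits_quota(needed_vcpus, quota_limit, quota_in_use=0):
    """True if the remaining quota covers the vCPUs needed."""
    return needed_vcpus <= quota_limit - quota_in_use


if __name__ == "__main__":
    # Example: 1 node per zone in 3 zones (hypothetical sizing).
    needed = required_vcpus(nodes_per_zone=1, zone_count=3)
    print(needed)  # 24
    # Hypothetical quota: limit of 24 vCPUs with 8 already in use.
    print(fits_quota(needed, quota_limit=24, quota_in_use=8))  # False
```

When the cluster autoscaler is enabled, passing the autoscaler's maximum node count instead of the initial size gives the peak quota the pool could need.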

Cluster Automation

The Cluster Automation section configures a few additional administrative operations:

- Maintenance window - Schedules cluster-level operations so that there is no downtime outside the maintenance window period.

- Notifications - GKE publishes notifications to Pub/Sub, providing you with a channel to receive pertinent information from GKE about your clusters.

- Vertical Pod Autoscaling - Vertical Pod autoscaling automatically analyzes and adjusts your containers' CPU requests and memory requests.

- Node Auto Provisioning - Node auto-provisioning automatically manages a set of node pools on the user's behalf. Without node auto-provisioning, GKE considers starting new nodes only from the set of user created node pools. With node auto-provisioning, new node pools can be created and deleted automatically.

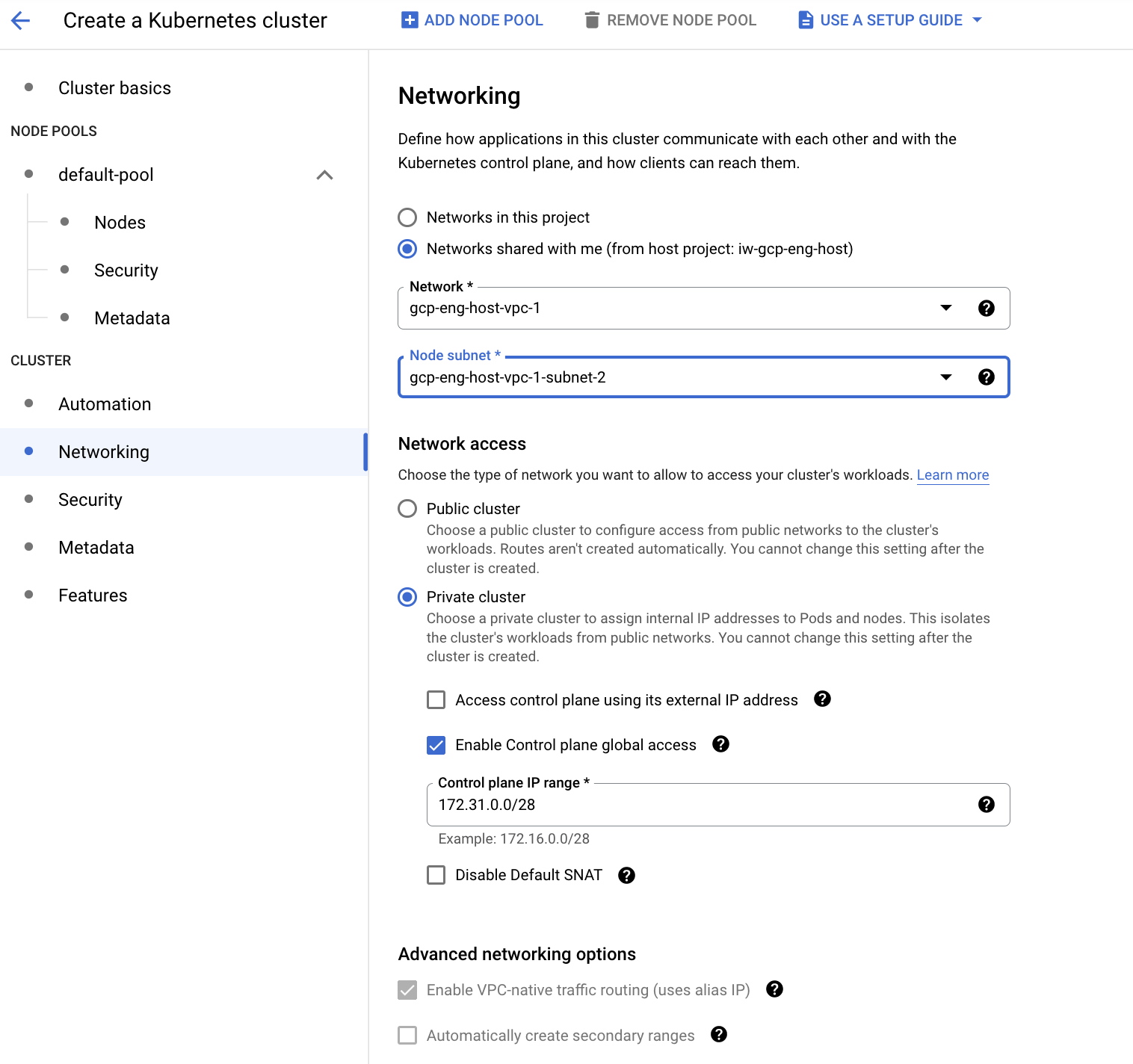

Cluster Networking

The Cluster Networking settings below describe how to keep the GKE cluster private, without exposing it to the outside world.

| Category | Description |
|---|---|
| Network (VPC) | Infoworks supports both dedicated project and shared project VPCs. |
| Network Access (Public/Private), GKE data plane | Choose a private or public cluster. Infoworks recommends a private cluster as a security best practice. |
| GKE control plane public access | Select the option to access the control plane using an external IP address if the GKE control plane endpoint must be publicly accessible. Infoworks recommends disabling this option and connecting through the bastion host over the private endpoint for better security. |
| GKE control plane global access | With control plane global access, you can access the control plane's private endpoint from any GCP region or CDW/data lake environment, regardless of the private cluster's region. |
| Control Plane IP Range | IP range for the GKE control plane endpoint address. Use a /28 subnet. |
| Misc options | Enable or disable the options based on your security and other requirements. |

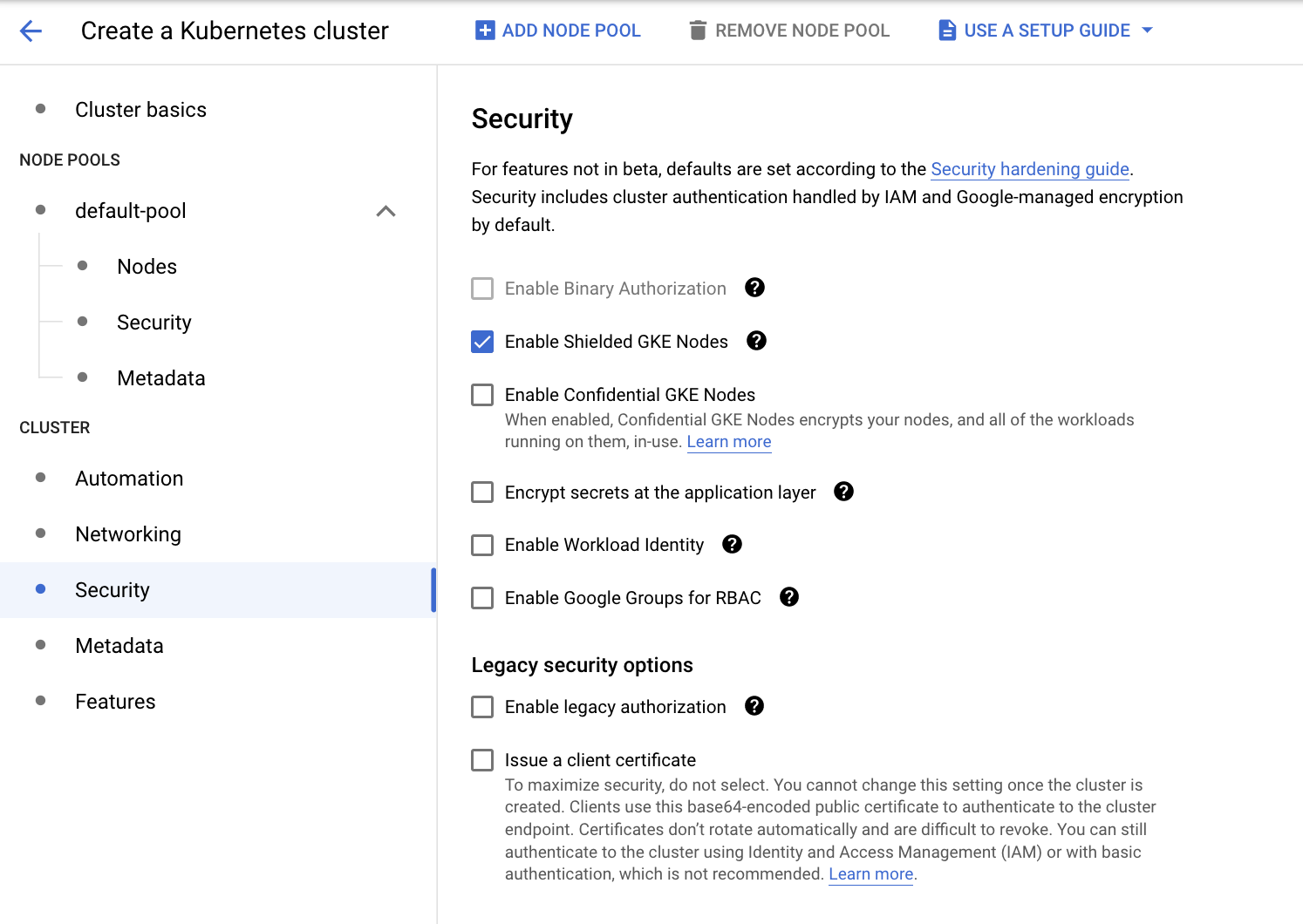
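A /28 range contains 16 addresses. Before entering a Control Plane IP Range, it can help to validate the candidate range. The sketch below checks that the range is a /28 and that it does not overlap the subnets already in use. The overlap check is an assumption based on common GKE guidance, and all CIDR values shown are hypothetical.

```python
import ipaddress


def check_control_plane_range(cidr, subnet_cidrs):
    """Return the parsed network if cidr is a /28 that overlaps none of subnet_cidrs."""
    # ip_network raises ValueError for malformed input or when host bits are set.
    net = ipaddress.ip_network(cidr)
    if net.prefixlen != 28:
        raise ValueError(f"{cidr} is a /{net.prefixlen}, expected a /28")
    for other in subnet_cidrs:
        if net.overlaps(ipaddress.ip_network(other)):
            raise ValueError(f"{cidr} overlaps existing subnet {other}")
    return net


if __name__ == "__main__":
    # Hypothetical control plane range and node subnet.
    net = check_control_plane_range("172.16.0.0/28", ["10.0.0.0/20"])
    print(net.num_addresses)  # 16
```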

Cluster Security

GKE protects clusters by using many layers of the stack, including the contents of your container image, the container runtime, the cluster network, and access to the cluster API server.

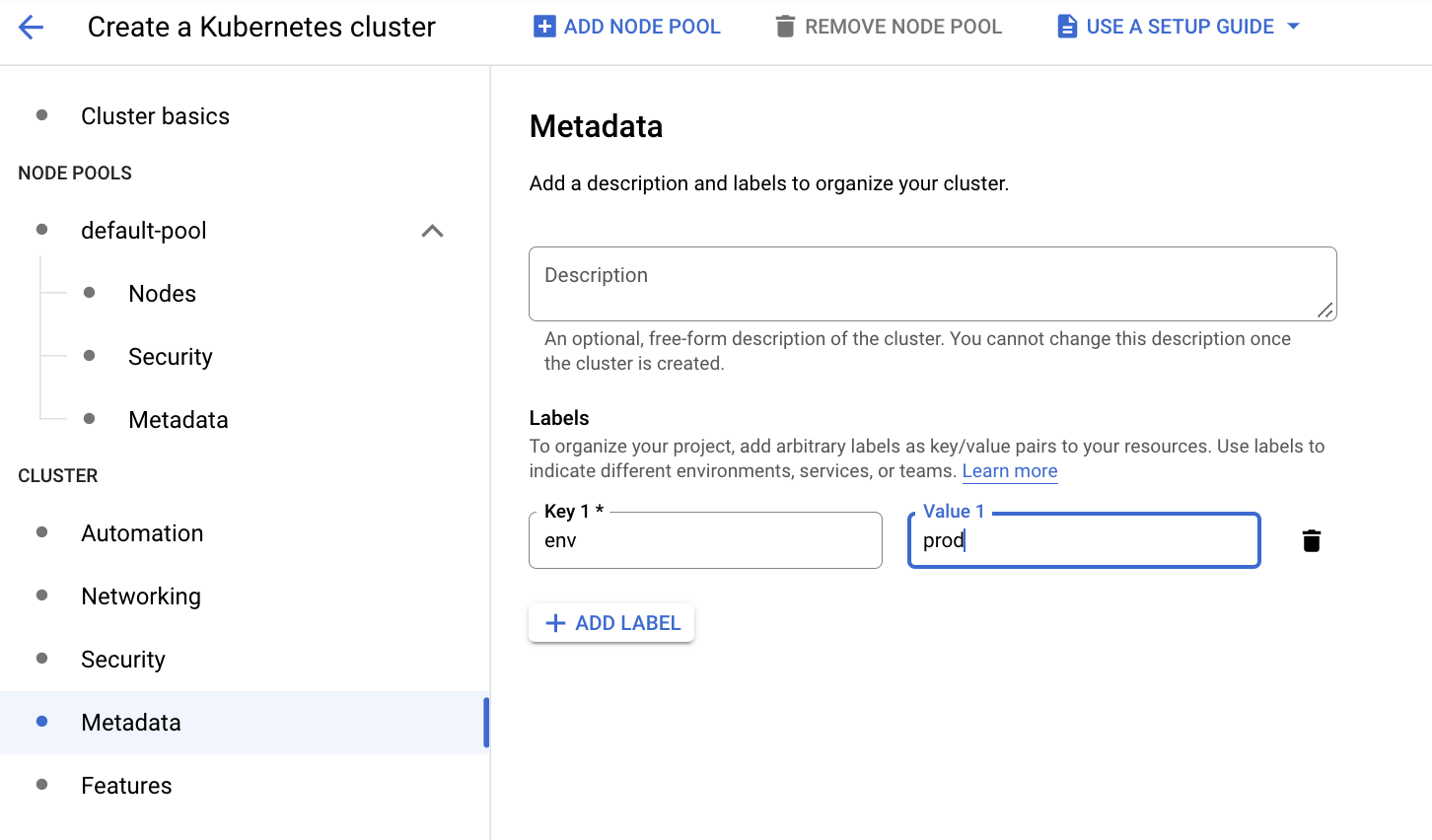

Cluster Metadata

This section describes the labels and metadata of the GKE cluster.

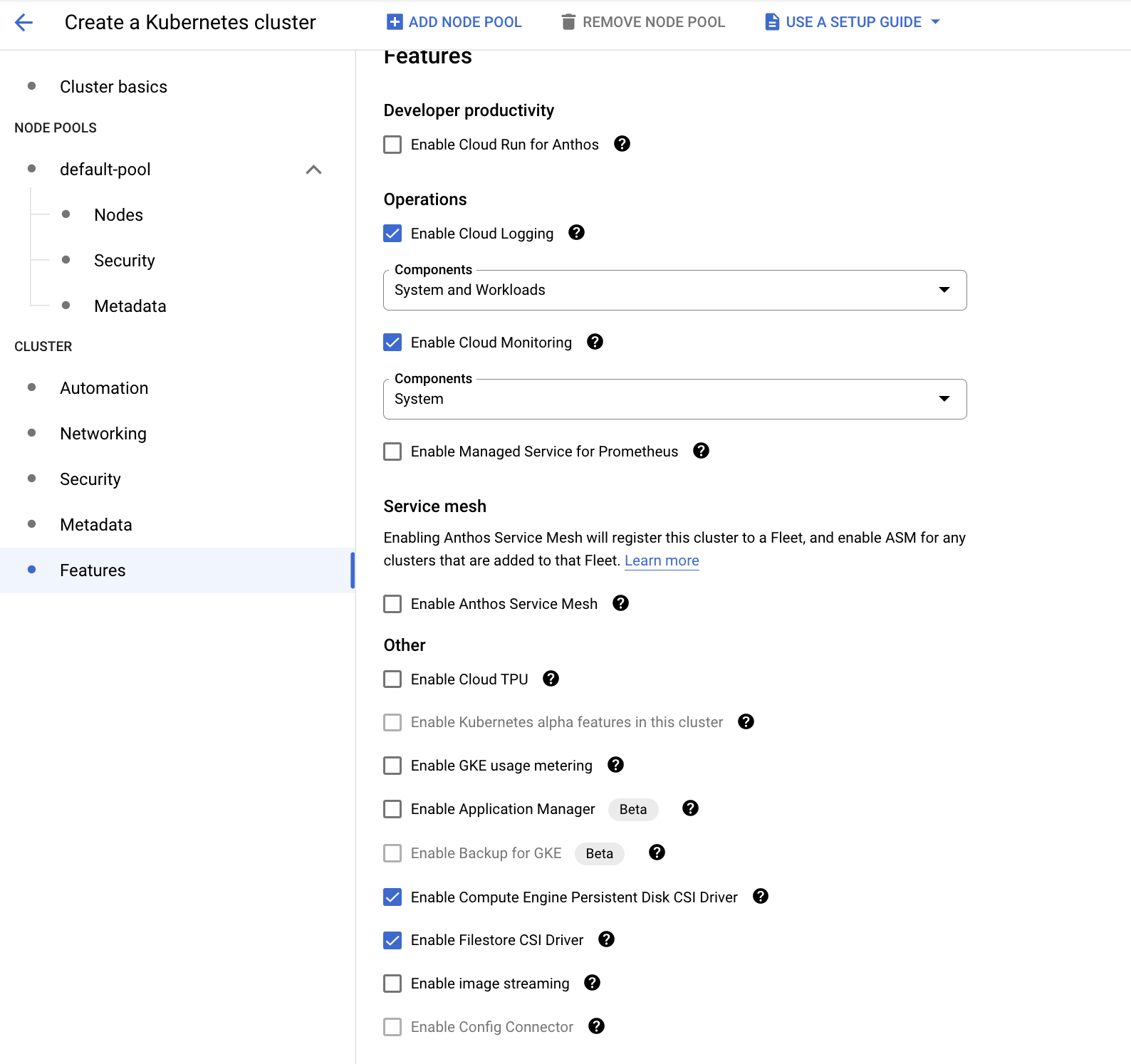

Cluster Features

This section describes GKE cluster functionality, such as monitoring, logging, usage metering, CSI drivers, etc.

Infoworks recommends completing the following requirements:

- Enable Compute Engine Persistent Disk CSI Driver

- Enable Filestore CSI Driver

- Enable Operations, such as Cloud Logging and Cloud Monitoring
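For a cluster that already exists, the features listed above can also be switched on with the gcloud CLI. The sketch below assembles one `gcloud container clusters update` command per change and does not run them. The cluster name and region are placeholders, and the logging and monitoring component values (`SYSTEM,WORKLOAD` and `SYSTEM`) are assumptions. Verify the flags against the gcloud reference for your SDK version.

```python
import shlex


def feature_update_commands(cluster, region):
    """Return gcloud argvs that enable the CSI drivers, logging, and monitoring."""
    base = ["gcloud", "container", "clusters", "update", cluster, "--region", region]
    # One change per invocation, so each setting gets its own command.
    changes = [
        ["--update-addons",
         "GcePersistentDiskCsiDriver=ENABLED,GcpFilestoreCsiDriver=ENABLED"],
        ["--logging", "SYSTEM,WORKLOAD"],  # Cloud Logging components (assumption)
        ["--monitoring", "SYSTEM"],        # Cloud Monitoring components (assumption)
    ]
    return [base + change for change in changes]


if __name__ == "__main__":
    # "my-cluster" and "us-central1" are placeholder values (assumption).
    for argv in feature_update_commands("my-cluster", "us-central1"):
        print(shlex.join(argv))
```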

© UNIPHORE TECHNOLOGIES 2025 | Confidential